In recent years, the rise of artificial intelligence (AI) has brought about numerous advancements in various fields. However, one aspect of AI that has raised concerns is its capacity to generate deepfake images. While some of these images may provide amusement, such as Arnold Schwarzenegger’s face superimposed on Clint Eastwood’s Dirty Harry, there is a darker side to this technology.

The Dark Side of Deepfakes

Deepfake technology has been increasingly used in malicious ways. Just last week, actor Tom Hanks had to denounce an ad that used his AI-generated likeness to promote a dental health plan. Even popular YouTubers like MrBeast have fallen victim to deepfakes, with videos falsely showing them offering expensive products at low prices. Ordinary citizens, too, have had their faces appear in fabricated images on social media without their consent.

Perhaps the most disturbing aspect of deepfake images is their use in “revenge porn.” Jilted lovers are now able to post fabricated images of their former partners in compromising or obscene positions. This not only causes emotional distress but also raises serious privacy concerns.

As the United States prepares for the highly contentious 2024 presidential election, deepfake imagery and video threaten to make it an election of unprecedented ugliness. Forged images and videos could significantly affect the political landscape, spreading confusion and misinformation.

Furthermore, the proliferation of fake images can also have a detrimental effect on the legal system. Lawyers are increasingly challenging evidence produced in court, taking advantage of a bewildered public unsure of what is true or false. The classic liar’s dividend is now a reality: because any image could be a deepfake, anyone can dismiss genuine evidence as fabricated.

The Ineffectiveness of Watermarking

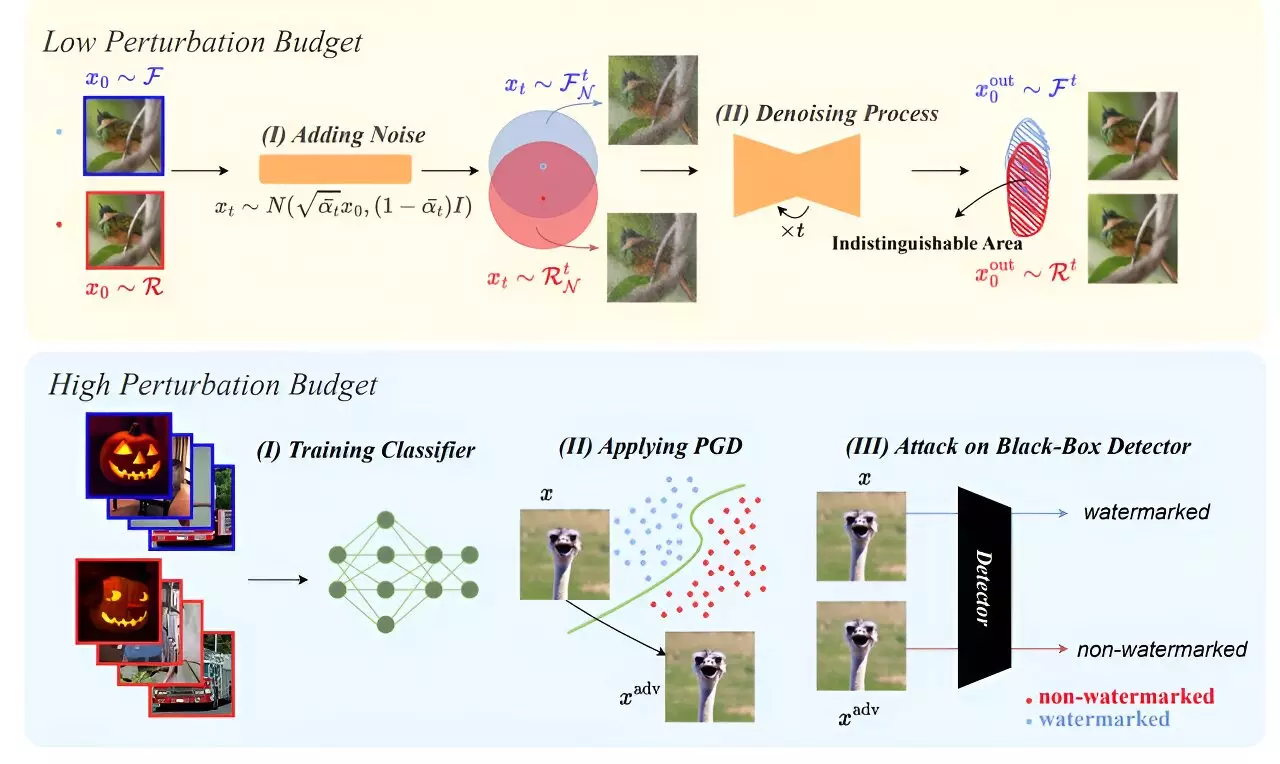

In an effort to combat disinformation, major digital media companies have promised to develop tools to detect and prevent the spread of deepfakes. One approach that has been widely discussed is the use of watermarking on AI-generated content. However, a recent paper published by professors at the University of Maryland raises doubts about the effectiveness of this method.

The University of Maryland researchers found that currently available watermarking techniques can easily be bypassed. The authors tested various workarounds against protective watermarks and broke all of them. This calls into question the reliability of watermarking as a defense against deepfake abuse.
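To give a feel for why a watermark can be fragile, here is a minimal Python sketch. It is a toy illustration only, not the method or the attacks from the University of Maryland paper: it assumes a naive scheme that hides one watermark bit in the least significant bit of each pixel, and an "attack" that adds imperceptible noise. The function names and the list-of-integers image are hypothetical and chosen for brevity.

```python
import random

def embed_lsb(pixels, bits):
    """Hide one watermark bit in the least significant bit of each pixel."""
    return [(p & ~1) | b for p, b in zip(pixels, bits)]

def extract_lsb(pixels):
    """Read the watermark back out of the least significant bits."""
    return [p & 1 for p in pixels]

def add_noise(pixels, rng):
    """Toy attack: add 0 or 1 to each pixel value, clamped to 255."""
    return [min(255, p + rng.choice((0, 1))) for p in pixels]

def match_rate(a, b):
    """Fraction of positions where two bit sequences agree."""
    return sum(x == y for x, y in zip(a, b)) / len(a)

rng = random.Random(0)  # fixed seed so the run is repeatable
pixels = [rng.randrange(256) for _ in range(10_000)]    # stand-in grayscale image
watermark = [rng.randrange(2) for _ in range(10_000)]   # random watermark bits

marked = embed_lsb(pixels, watermark)
attacked = add_noise(marked, rng)

clean_rate = match_rate(extract_lsb(marked), watermark)
attacked_rate = match_rate(extract_lsb(attacked), watermark)
max_change = max(abs(a - m) for a, m in zip(attacked, marked))

print("watermark recovered before attack:", clean_rate)
print("watermark recovered after attack:", attacked_rate)
print("largest pixel change from attack:", max_change)
```

In this toy setting the watermark is read back perfectly from the untouched image, while after the noise is added the recovered bits agree with the original only about as often as a coin flip would, even though no pixel changes by more than one brightness level. Real watermarking schemes are far more sophisticated, but the same tension applies: the mark must be invisible to viewers yet survive small edits.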

The Urgent Need to Address the Challenge

The implications of AI-generated deepfake content are far-reaching. The misapplication of this technology introduces hazards related to misinformation, fraud, and even national security issues like election manipulation. Deepfakes can cause personal harm, including character defamation and emotional distress, impacting both individuals and society as a whole.

To address this growing challenge, the development of robust watermarking algorithms is crucial. However, designing a watermark that is immune to attacks is a challenging task. The cat-and-mouse game between attackers and defenders will continue, with the bad guys constantly working to break the defenses created by the good guys.

In the meantime, individuals must exercise vigilance and perform due diligence when reviewing images containing potentially important content. Double-checking sources, verifying information, and relying on common sense are essential in our digital age, where deepfakes pose a significant threat to trust, privacy, and democracy.

While the current state of deepfake detection may seem worrisome, there is optimism for the future. Advances in AI technology will likely lead to better detection algorithms that can effectively combat deepfakes. It is essential for researchers and industry experts to continue working together to stay one step ahead of malicious actors and protect society from the harmful effects of deepfake images.

The rise of deepfake images brings with it a growing threat to privacy, trust, and democracy. It is crucial for individuals, media companies, and legal systems to adapt and develop effective countermeasures to combat the spread of deepfake content. Only through a comprehensive approach can we ensure a safer digital landscape for all.
