Artificial intelligence (AI) has become an increasingly important field of research, with applications across many industries. However, a common challenge in developing AI models is bias in the training data. Such bias can degrade a model's performance and accuracy, limiting its practical use. Professor Sang-hyun Park and his research team at Daegu Gyeongbuk Institute of Science and Technology (DGIST) have recently developed a novel image translation model that effectively reduces data bias, leading to improved image analysis performance.

When deep learning models are trained on datasets collected from multiple sources, the data can become biased for a variety of reasons. For example, when building a dataset to distinguish bacterial pneumonia from COVID-19, the conditions under which the images were acquired may differ between the two groups. These differences can leave subtle traces in the images, leading the model to rely on such irrelevant cues rather than on the features that actually identify the disease. As a result, the model performs well on the training data but generalizes poorly to new data, a form of overfitting.

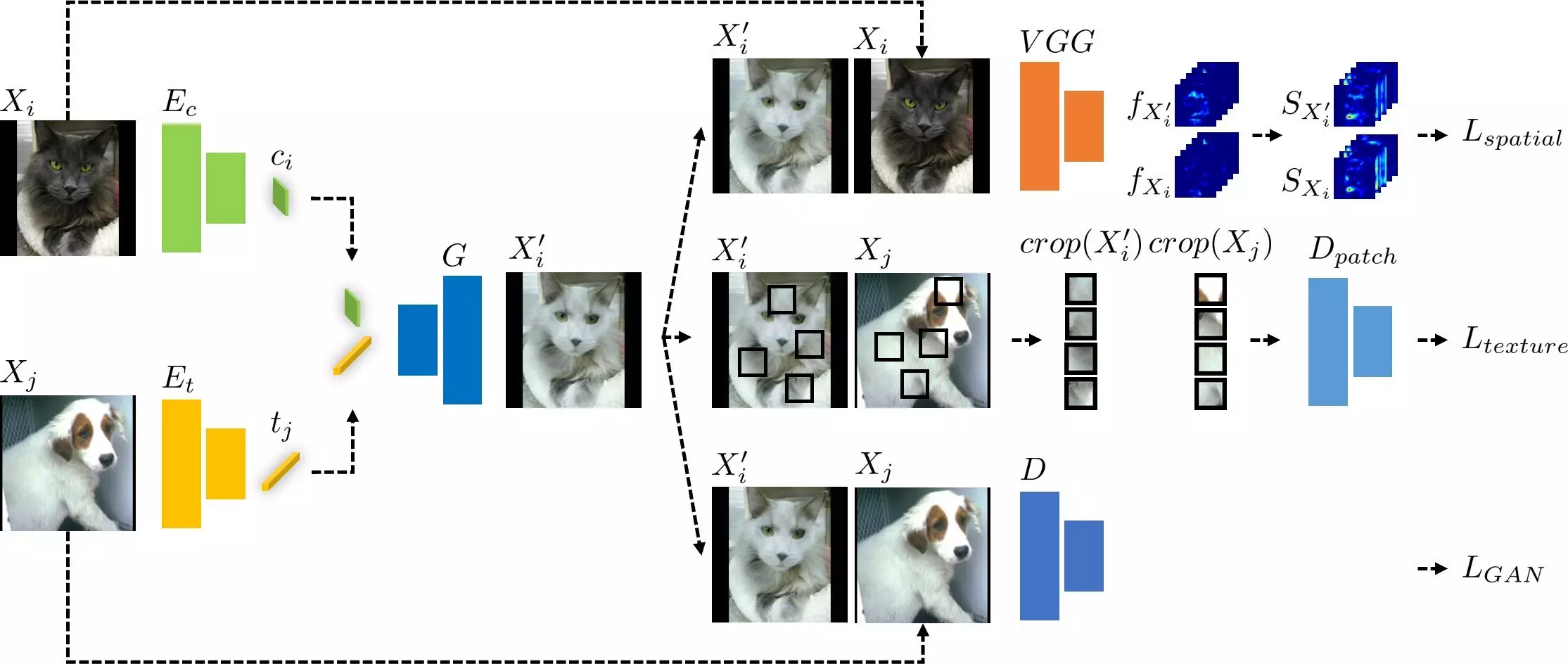

To address data bias, Professor Park's research team developed an image translation model based on texture debiasing. Existing image translation models often struggle to change an image's texture, because texture and content are entangled. To overcome this limitation, the team proposed a model that optimizes error (loss) functions for texture and content simultaneously. The model extracts content information from an input image and texture information from an image in a different domain, then combines the two to generate an image that preserves the original content while adopting the texture of the new domain.
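The article does not specify the team's actual loss functions, so the sketch below substitutes a widely used stand-in to illustrate the general idea of separate content and texture terms: a position-by-position mean-squared content loss, and a Gram-matrix texture loss in the style of neural style transfer. It assumes NumPy arrays standing in for `(channels, height, width)` feature maps produced by some encoder; the function names and weights are hypothetical, and this is not the DGIST model.

```python
import numpy as np


def gram_matrix(features: np.ndarray) -> np.ndarray:
    """Return the (C, C) Gram matrix of a (C, H, W) feature map.

    Each entry sums the product of two channels over all spatial positions
    (normalized by the number of positions), so it records which features
    co-occur (texture) but not where they occur (layout).
    """
    c, h, w = features.shape
    flat = features.reshape(c, h * w)
    return flat @ flat.T / (h * w)


def content_loss(generated: np.ndarray, content_source: np.ndarray) -> float:
    """Mean squared difference between feature maps at matching positions."""
    return float(np.mean((generated - content_source) ** 2))


def texture_loss(generated: np.ndarray, texture_source: np.ndarray) -> float:
    """Mean squared difference between Gram matrices (position-independent)."""
    return float(np.mean((gram_matrix(generated) - gram_matrix(texture_source)) ** 2))


def translation_loss(generated: np.ndarray,
                     content_source: np.ndarray,
                     texture_source: np.ndarray,
                     content_weight: float = 1.0,
                     texture_weight: float = 1.0) -> float:
    """Hypothetical combined objective: keep the content, adopt the texture."""
    return (content_weight * content_loss(generated, content_source)
            + texture_weight * texture_loss(generated, texture_source))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    content_feat = rng.normal(size=(4, 8, 8))  # stand-in features of the input image
    texture_feat = rng.normal(size=(4, 8, 8))  # stand-in features of a target-domain image

    # Shuffling spatial positions destroys layout but keeps channel co-occurrence.
    perm = rng.permutation(8 * 8)
    shuffled = content_feat.reshape(4, -1)[:, perm].reshape(4, 8, 8)

    # Nonzero: the features moved to different positions.
    print("content loss vs. shuffled:", content_loss(shuffled, content_feat))
    # Near zero: the Gram matrix ignores where features occur.
    print("texture loss vs. shuffled:", texture_loss(shuffled, content_feat))
    print("combined loss:", translation_loss(content_feat, content_feat, texture_feat))
```

The shuffling demo shows why two separate terms are useful: a purely position-wise loss cannot tell texture from layout, while a Gram-style statistic is blind to layout, so weighting both lets an optimizer keep one image's structure while matching another's texture statistics.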

The new deep learning model outperforms existing debiasing and image translation techniques. It achieves better results on datasets with texture biases, such as classification datasets for recognizing digits or for distinguishing dogs and cats with different fur colors. It also performs well on datasets with other kinds of bias, including multi-label digit datasets and datasets that mix image types such as photos, animations, and sketches. Furthermore, the team's image translation technique can be applied to image manipulation, changing only an image's texture while preserving its original content, and in this task it surpasses existing image manipulation methods.

The model could open up new possibilities in several industries. In autonomous driving, it could help overcome biases in training data, leading to more accurate detection and recognition of objects on the road. In content creation, it could be used to generate high-quality images with different textures, giving artists and designers more creative options. In medicine, its ability to reduce bias in disease-identification datasets could improve the accuracy of diagnosis and, in turn, treatment.

Professor Sang-hyun Park and his research team at DGIST have made significant progress in addressing data bias with their new image translation model. By combining texture debiasing with loss functions for both texture and content, the model outperforms existing methods and shows promise for a range of applications. The work has the potential to advance AI technologies, particularly in autonomous driving, content creation, and medicine. As researchers continue to refine and extend the model, further gains in AI's ability to handle biased data and in overall performance can be expected.
